Data Visualisation for Machine Learning Agents

As (late) 2025 was the year of agents, I have been experimenting with agents to build credible Machine Learning models and “do Data Science”.

As a Data Scientist, I spend a significant part of my time looking at plots and data. Especially since I now generate most of my SQL queries and Python boilerplate code.

Building these agents, I started thinking: can agents build and consume data visualisation? I already knew that they could build advanced matplotlib figures. But can they use plots to solve problems?

Listening to my engineering self, I started thinking about a way in which we could build token-efficient data visualisation for Language Models (LMs), that could also be human-readable. I started to think about cute ASCII-based plots and getting excited.

I then paused. I may have skipped a step. Are we sure that such a capability is needed? An affirmative answer to this question would assume the following statements:

- LMs and coding agents today cannot natively build visuals and consume them

- There are problems that require data visualisation to be solved efficiently by LMs

These are strong assumptions. I then put my Computer Scientist hat on to explore this issue: do we really need a data visualisation paradigm for Language Models?

It was a fun rabbit hole, I hope you enjoy it.

Some Context

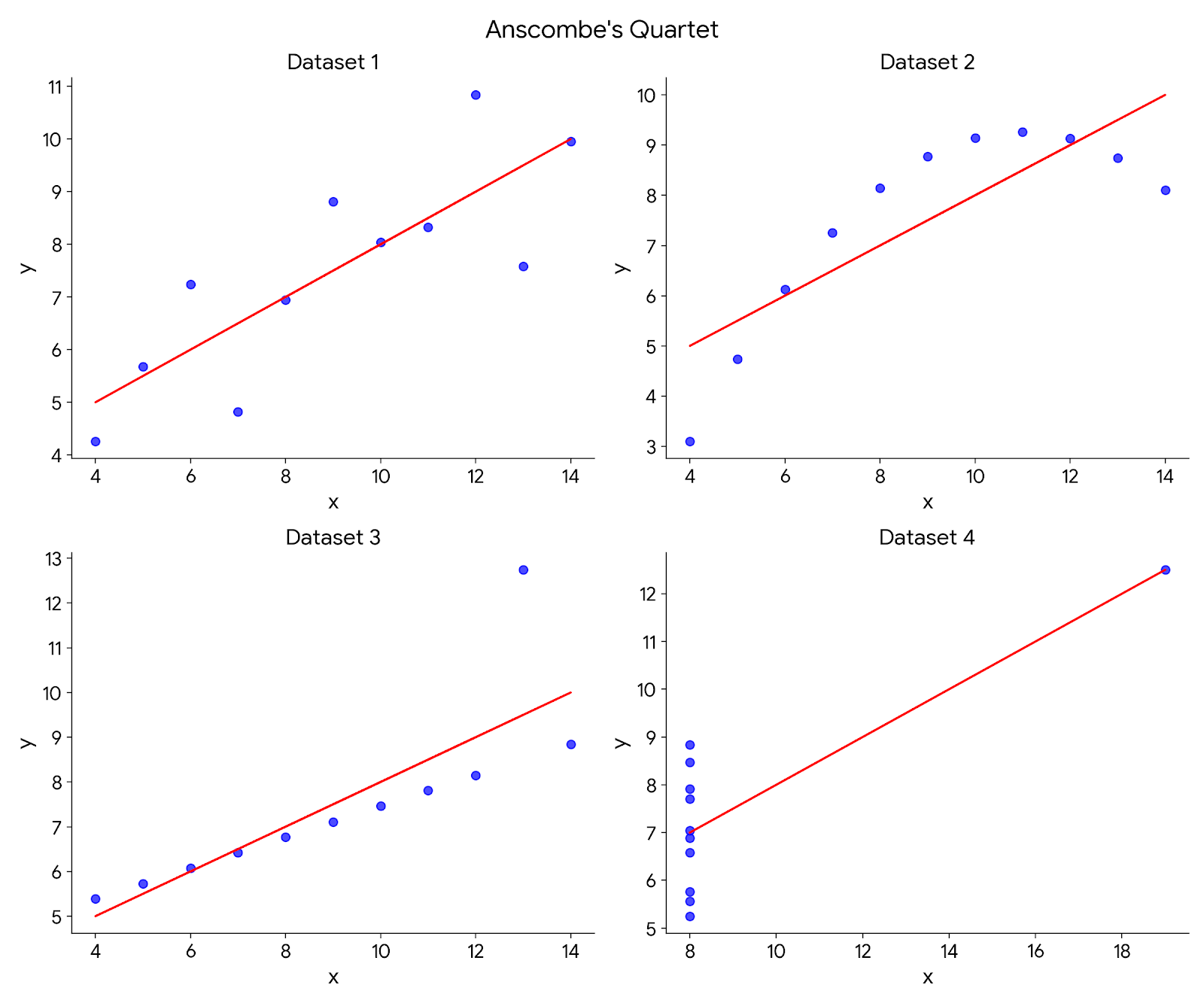

Anscombe’s quartet

Being trained in Computer Science in the 2010s, I read Tufte’s majestic The Visual Display of Quantitative Information. In this book, Tufte introduces Anscombe’s Quartet: four datasets that have identical descriptive statistics:

| Property | Value |

|---|---|

| Mean of \(x\) | \(9\) |

| Sample variance of \(x\) | \(11\) |

| Mean of \(y\) | \(7.50\) |

| Sample variance of \(y\) | \(4.12\) |

| Correlation between \(x\) and \(y\) | \(0.816\) |

| Linear regression line | \(y = 3.00 + 0.500 \cdot x\) |

And yet, look very different when plotted:

Having worked several years in industry, when I face an issue with a Machine Learning model, I plot things: predictions and actuals, residuals, feature values… Sooner or later, this gets me to the root of the problem.

Because of this, I believed that we could not build Data Science agents unable to visualise data when things go wrong. And they will, believe me.

Language Models and Images

Why did I doubt Language Models in the first place? Why did I think that we would need to create easily consumable visuals just for them?

A first hint could be in their name: “Language Models”. These models are trained to take a sequence as input and predict the next element of this sequence. We do the same when we read. If you do not believe me, try very hard not to predict my next ____.

In particular, these models are trained on text. They take text as input and output the next word or token. If you are interested, I included a chapter on this topic in my Intro to ML Book.

To read images, they parse them into a sequence of chunks or patches. This works surprisingly well and has enabled models to become impressively multimodal. This is a fancy word to say they can take inputs in different modalities; e.g., text, image, video, audio.

In my mind though, these models are made for text. The rest of this article will test this assumption.

Testing Assumptions

LMs cannot or do not use data visualisation to solve problems

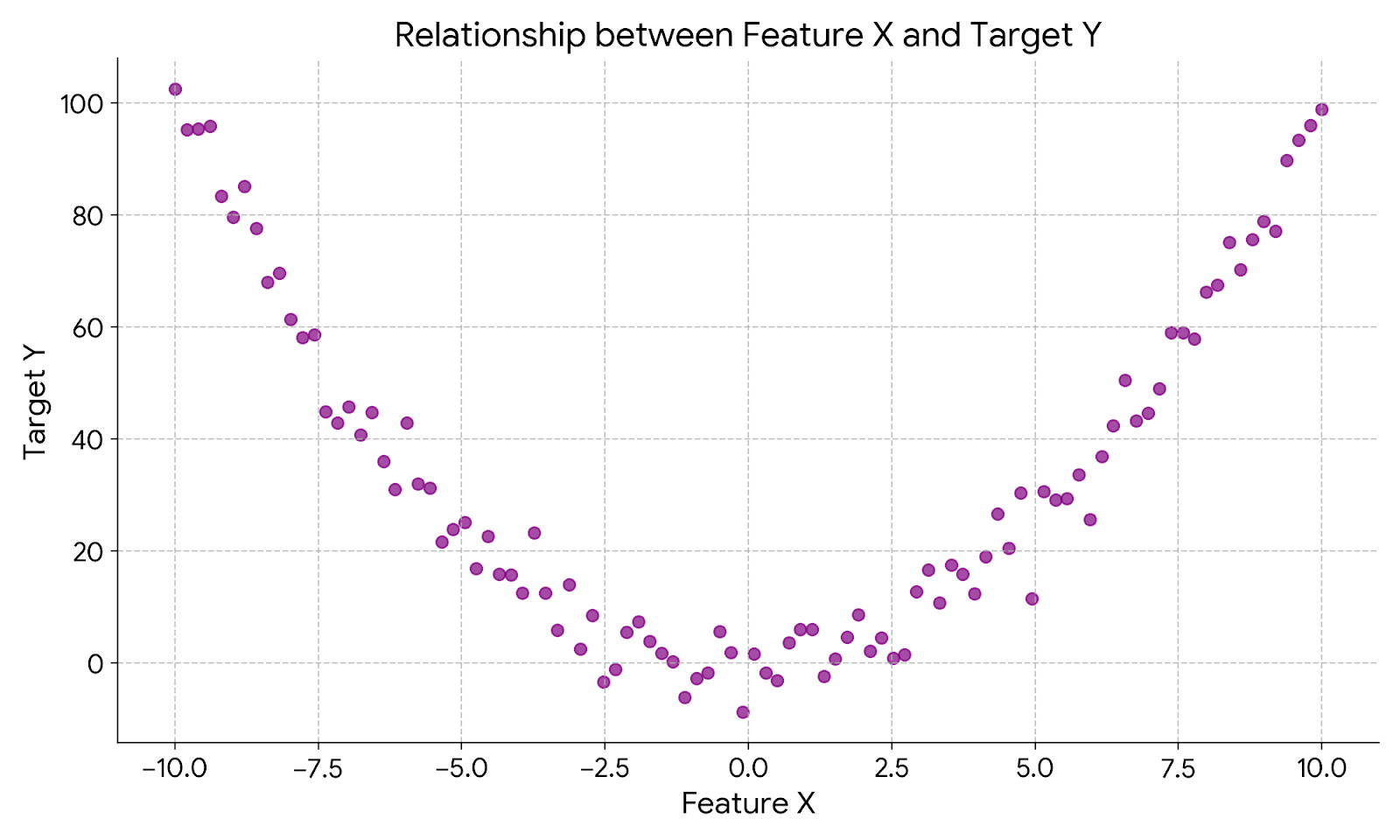

To do so, I generated a dataset in which a feature X had a non-linear relationship with a target variable Y.

I would expect that it may not be easy for a coding agent to see the link between the two variables without seeing it. Look at me anthropomorphising Claude, cute.

I then gave the CSV to Claude Code (Haiku), with the prompt: “Is feature X a useful predictor of target Y”?

I got the following output:

Looking at the data in 1.csv, yes, Feature_X appears to be a useful predictor

of Target_Y, but the relationship is non-linear.

Here's what I observe:

Pattern:

- Extreme negative X values (around -10): Y ≈ 90-102

- X near -5 to -2: Y drops to 5-35 range

- X near 0: Y is lowest and most variable, mostly -8 to +5

- X near +5: Y starts increasing again, 20-30 range

- Extreme positive X values (around +10): Y ≈ 80-101

This describes a U-shaped or parabolic relationship — Target_Y appears to

increase as Feature_X moves away from zero in either direction.

Implications:

- A linear regression would perform poorly

- A polynomial regression (quadratic/degree 2) would likely fit much better

- Feature_X has substantial predictive power, but you need to capture the

non-linear pattern

If you wanted to use this in a linear model, you could add a squared term

(Feature_X²) to capture the parabolic relationship. Alternatively, tree-based

models or polynomial regression would naturally capture this pattern.

Looking at the thinking trace, no data visualisation happened but we got to the right answer. Somehow, the model was able to summarise different ranges of values of X and Y and inferred a pattern. Claude manually did some kind of a Mutual Information calculation, not bad. I was very skeptical of these results, so I opened the CSV, and found:

Feature_X,Target_Y

-10.0,102.48357076505616

-9.899497487437186,97.30872899791923

-9.798994974874372,99.25874520811665

-9.698492462311558,101.67590532355422

-9.597989949748744,90.95064420186121

-9.49748743718593,89.03158283475865

-9.396984924623116,96.19938975113106

-9.296482412060302,90.26175888351108

-9.195979899497488,82.21867438228706

-9.095477386934673,85.44050911416981

-8.99497487437186,78.59248452651875

-8.894472361809045,76.78298982713369

-8.793969849246231,78.54371706728196

-8.693467336683417,66.00997311069247

The values were sorted 🤦♂️(facepalm). I decided to make this exercise harder. I shuffled values and added other features, two very noisy ones and one linearly correlated with the target.

In the end, there were 4 features, two of them useful predictors of the target variables. I then gave the CSV to Claude with the following prompt:

Open dataset_alpha.csv. We are building a predictive model for the Target column. We have four candidate features (A, B, C, and D), but we only want to keep the ones that actually hold predictive signal. Which columns should we keep?

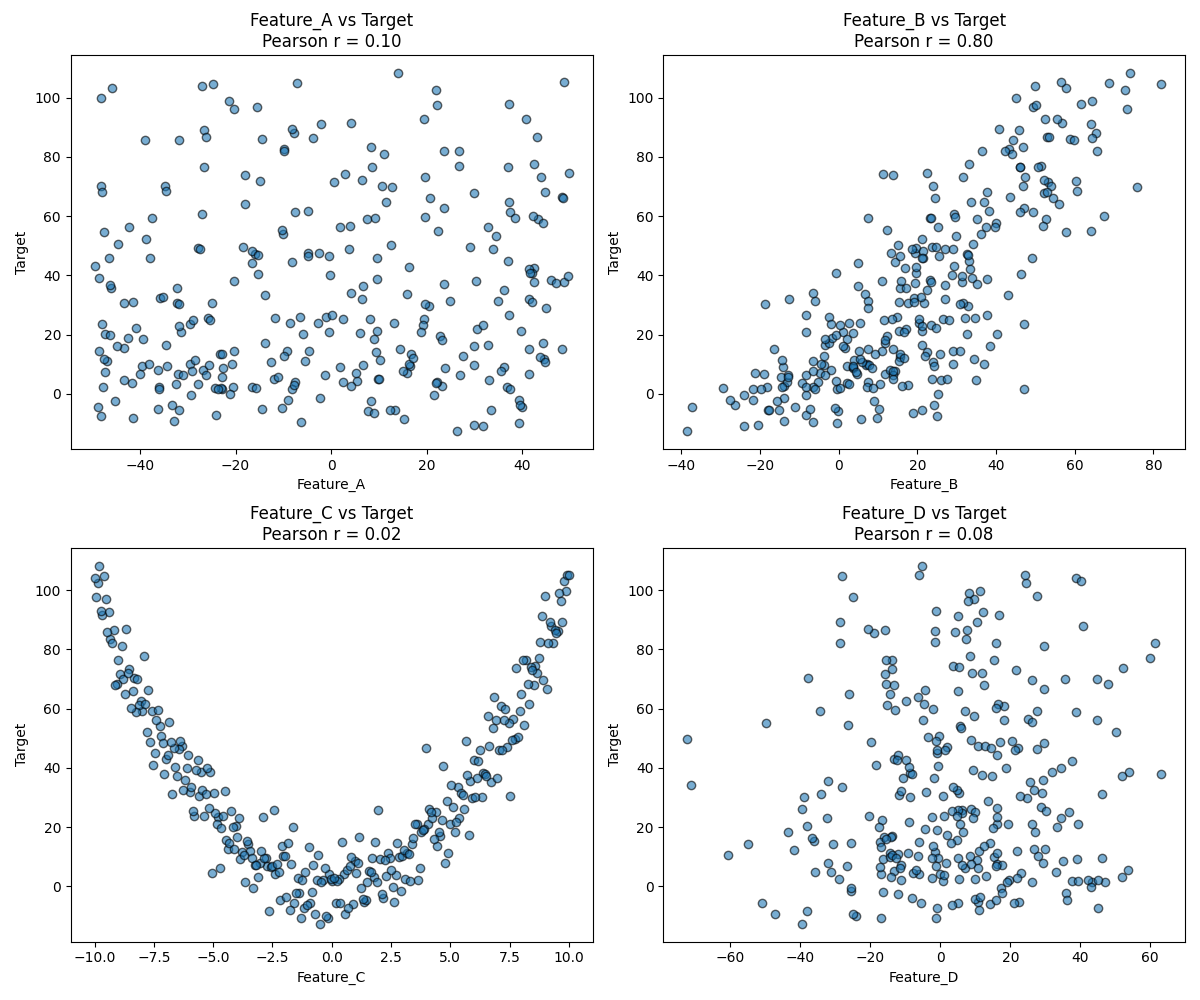

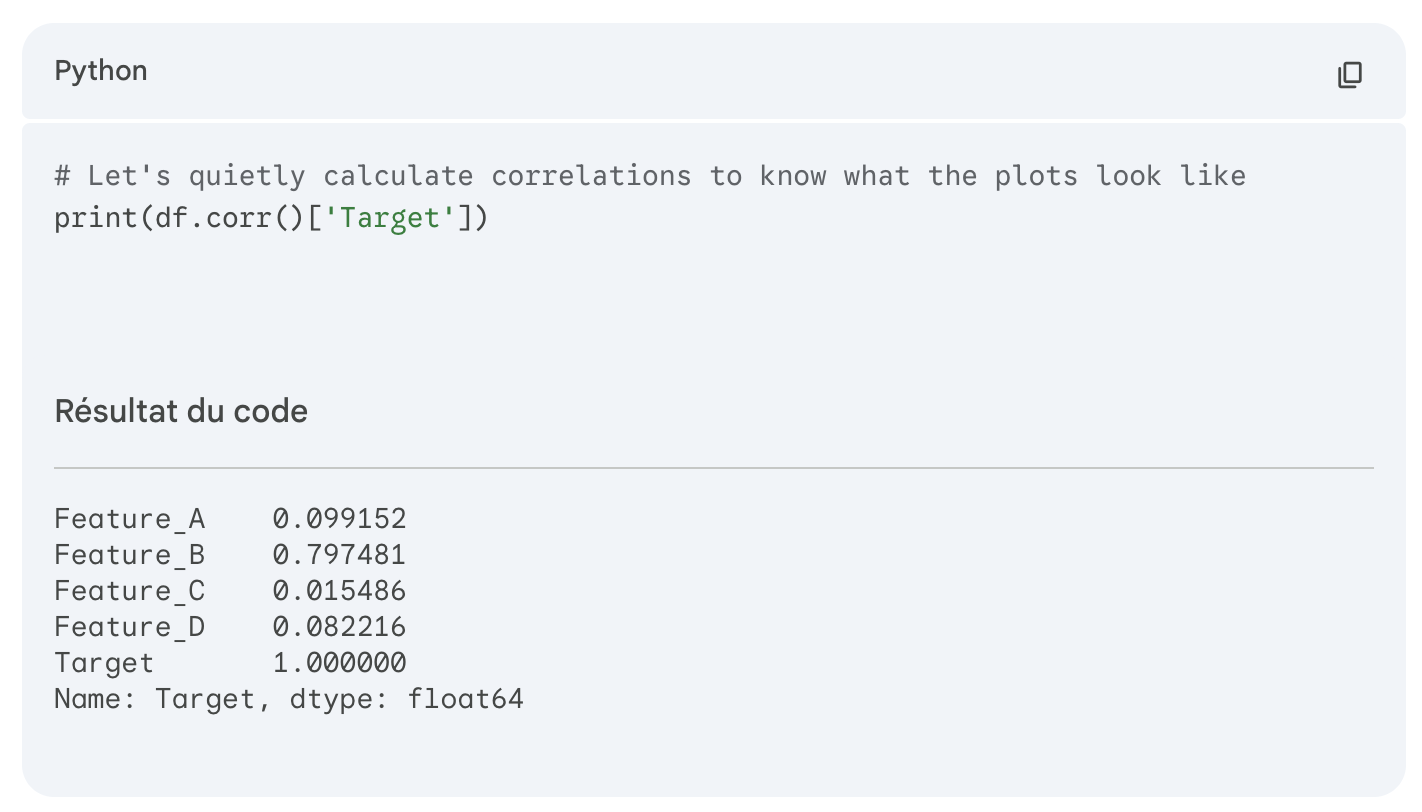

There, after a bit of thinking, Claude computed the correlation scores and told me to drop all features except the linearly correlated one. Ok, that confirms that this scanning ability was only the result of value sorting. With more columns and shuffled values, Claude computed the correlation coefficients and used this as a decision criterion.

I decided to help Claude a bit. In a new session, I prompted it to use data visualisation adding, “use data visualisation to solve this”. This is where things got interesting. Claude calculated the correlation coefficients (as before) and generated the following plot:

I thought, ok, this is it, Claude has figured it out. No need for a custom solution, with just a bit of prompting, we can get these models to build visuals and read them. This was before reading the summary table and its conclusions:

Recommendation: Keep only Feature_B

Analysis Results:

┌───────────┬─────────────┬──────────────────────────────────┐

│ Feature │ Correlation │ Significance │

├───────────┼─────────────┼──────────────────────────────────┤

│ Feature_B │ 0.7975 │ ✅ STRONG predictive signal │

├───────────┼─────────────┼──────────────────────────────────┤

│ Feature_A │ 0.0992 │ ❌ Weak (not significant) │

├───────────┼─────────────┼──────────────────────────────────┤

│ Feature_D │ 0.0822 │ ❌ Weak (not significant) │

├───────────┼─────────────┼──────────────────────────────────┤

│ Feature_C │ 0.0155 │ ❌ No signal (essentially noise) │

└───────────┴─────────────┴──────────────────────────────────┘

It managed to plot the charts that contained the answer, and yet it did not use the visuals in its thinking.

Now, this shows that Claude Code with Haiku does not natively build visuals for its own consumption.

I already hear some readers screaming: why don’t you use more powerful models? I hear you well. So I moved to Gemini Pro, using the same CSV and prompt:

Open dataset_alpha.csv. We are building a predictive model for the Target column. We have four candidate features (A, B, C, and D), but we only want to keep the ones that actually hold predictive signal. Which columns should we keep?

Here, Gemini did a much better job than Haiku. Like a proper Data Scientist, it computed correlation coefficients and mutual information between features and targets. This was enough to find that both feature B and C contain predictive signal. It even trained a Random Forest Regressor and extracted its feature importances to confirm. Not bad, but still no data visualisation.

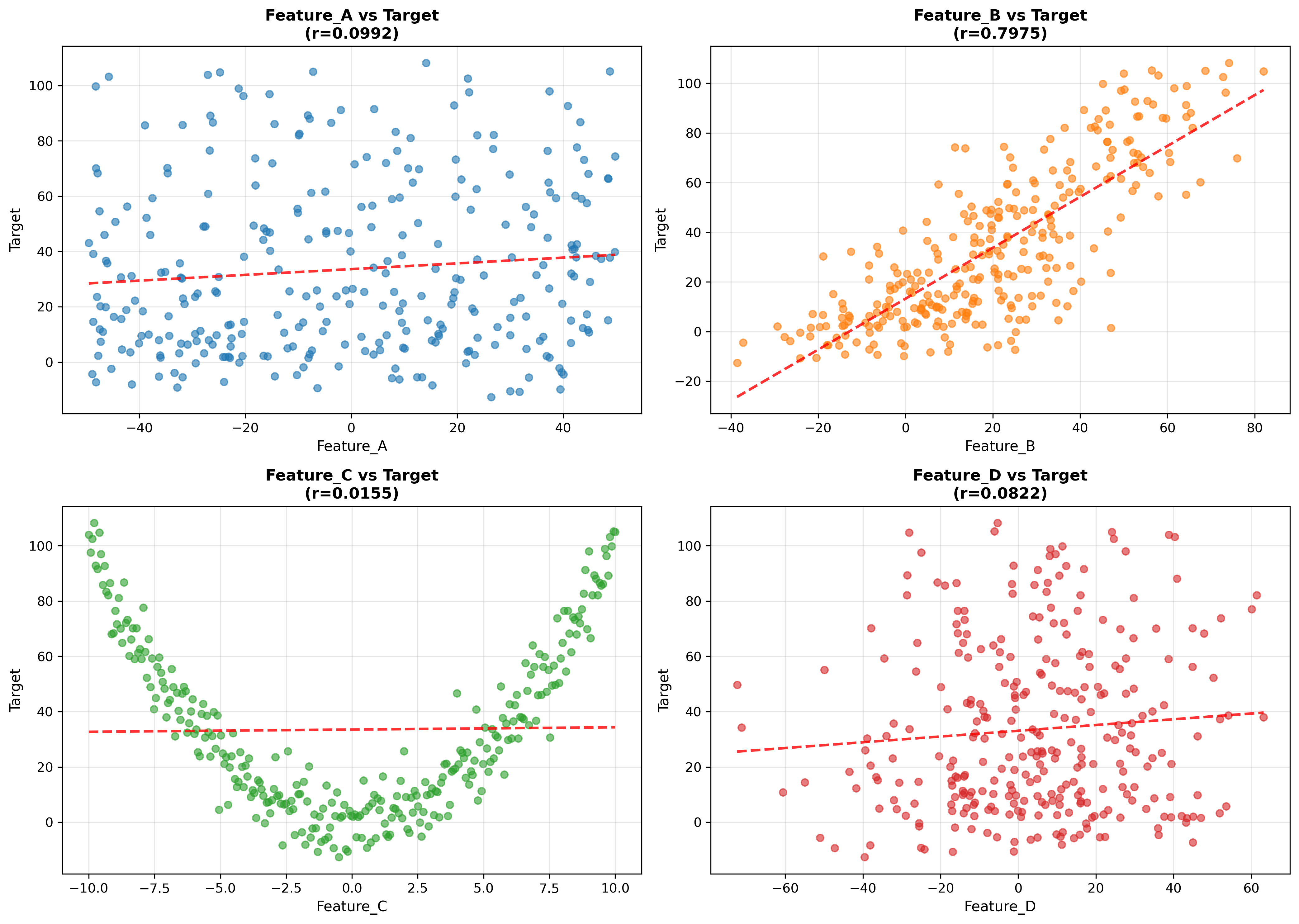

So I decided to prompt it to use data visualisation, adding “Use data visualisation to solve this” to the previous prompt. There, things started to get strange.

Gemini built all the visuals we needed to answer the question. I thought to myself: this is it, stronger models use visuals to come up with an answer.

Looking into the reasoning trace and code generated, it still trained a Random Forest Regressor and used this information in its reasoning to build its final answer. Similar to Claude Code Haiku, it built the charts, but did not use them. Sneaky.

Can I prompt Gemini harder? Can I tell it explicitly not to train a model or use correlation coefficients?

Open dataset_alpha.csv. We are building a predictive model for the Target column. We have four candidate features (A, B, C, and D), but we only want to keep the ones that actually hold predictive signal. Which columns should we keep? Use only data visualisation to solve this

Things got stranger. It showed me the visuals again and gave me a perfectly reasonable and correct answer. When I decided to look at the code used to generate the answer, I found this:

It had still computed the correlation coefficients and trained a Random Forest, it just did not tell me this time. Double sneaky.

I have used coding agents too much to be surprised or offended by this behaviour. Modern agents just do things. When I tell it to look at a graph to answer, it knows that this is just another human treating it like another human with eyes. I tried to refrain from using the word “anthropomorphising”, but here it is, I did not manage. The model just got to the correct end result, regardless of my “faulty” instructions.

Do LMs actually need data visualisation?

This finding got me scared, excited, and sent me down mild existential questioning. Do we even need data visualisation at all? If you can filter data, dynamically compute descriptive statistics or scan thousands of numbers, do you need data visualisation?

Was data visualisation something we invented to deal with the way our brain is wired? Most of us explore the world with the sense of sight. It is only logical that we would use this same sense of sight to explore data.

We cannot easily write ad hoc python scripts for everything or scan thousands of numbers without getting tired. For this reason, we come up with ways to represent information that makes the “things” we look for stand out: trend, anomalies, clusters, correlations…

I am not sure that this is correct though. I still have to think further about problems that cannot be reliably solved with a custom Python script without visuals. If you have some ideas, let me know!